eXpLogic: Explaining DiffLogic Networks

🥳 Best Student Paper Award (ITNG) 🥳

Purpose: To summarize the eXpLogic toolset (see arXiv & SpringerNature) developed for the DiffLogic machine learning model. The goal of the work was to develop a comprehensive explanation algorithm for DiffLogic networks that bridges the gap between neural network complexity and human interpretability. The eXpLogic method provides both fine-grained local explanations and higher-level functional explanations of model behavior, addressing the "black box" problem in deep learning while maintaining prediction accuracy. See the conference presentation below!

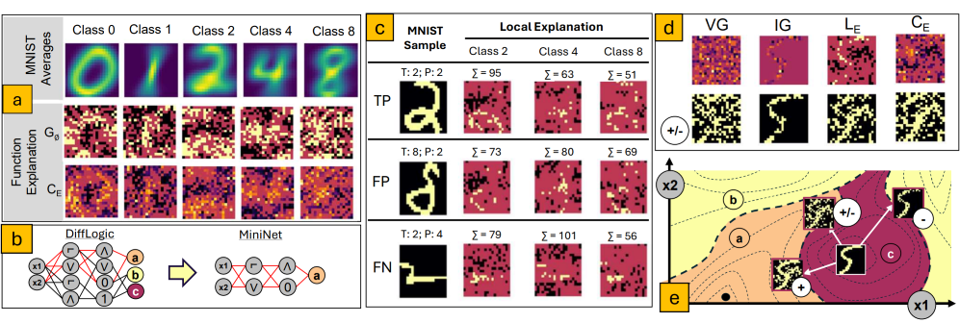

Background: DiffLogic networks employ differentiable logic operations that approximate classical logic gates within neural architectures. These networks learn by implicitly pruning "activation paths", the specific routes through learned logic gates from input to output. While powerful for prediction tasks, these models have historically lacked transparency. The eXpLogic algorithm advances existing explainability research by targeting the unique structure of logic-based neural networks, moving beyond generic saliency methods such as Grad-CAM, SHAP, and DeepLIFT, which can sometimes provide misleading information.
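To make "differentiable logic operations" concrete, here is a minimal sketch of how a single learnable gate can be relaxed to real values. It assumes the probabilistic relaxations commonly used for differentiable logic gate networks (for example, AND as a product); the gate subset, the `soft_neuron` and `hard_gate` helpers, and the logits are illustrative and are not taken from the eXpLogic paper.

```python
import math

# Real-valued relaxations of a few two-input logic gates (assumed forms).
# For inputs in {0, 1} they reproduce the classical truth tables.
SOFT_GATES = {
    "AND": lambda a, b: a * b,
    "OR": lambda a, b: a + b - a * b,
    "XOR": lambda a, b: a + b - 2 * a * b,
    "NAND": lambda a, b: 1 - a * b,
}


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def soft_neuron(a, b, logits):
    """Training-time output: a softmax-weighted mixture of all gate outputs."""
    weights = softmax(logits)
    return sum(w * gate(a, b) for w, gate in zip(weights, SOFT_GATES.values()))


def hard_gate(logits):
    """Inference-time choice: keep only the gate with the largest logit."""
    names = list(SOFT_GATES)
    return names[max(range(len(logits)), key=lambda i: logits[i])]
```

During training the mixture keeps the neuron differentiable with respect to its logits; once the logits settle, `hard_gate` collapses the neuron to one discrete gate, which is what makes path-based explanations possible afterwards.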

Fig. 1: Overview of eXpLogic, showing: (a) examples of the eXpLogic function explanations, each exhibiting the input patterns that influence one digit class from the MNIST dataset; (b) a notional DiffLogic architecture that predicts three classes, juxtaposed with a MiniNet derived from the fan-in of "class a"; (c) the local explanation method, showing the evidence (important pixels) that supports the decisions for classes 2, 4, and 8 from the MNIST dataset. Each row shows a TP, FP, or FN prediction; the image titles show the True (T) and Predicted (P) labels for the MNIST images and the number of important inputs (Σ) identified for each explanation. Note that Σ is not always highest for the predicted class. Light pixels represent important positive inputs, whereas dark pixels represent important zero inputs; (d) a graphical illustration of the SwitchDist used to evaluate each saliency method, where a current input, class 5 in this case, is modified in (e) three directions which either add, remove, or alter the important input pixels.

Techniques & Skills Demonstrated

- Algorithm Development (Algorithm 1) – Developed a breadth-based search algorithm for generating local and global explanations for DiffLogic models

- Local Explanation Generation – Converting activation path strengths into interpretable visualizations that highlight which input features drive individual predictions

- Function Explanation Generation – Mathematical approach to distill high-level logical patterns from the network's internal representation, producing human-readable logical expressions

- Developing Explainability Metrics – Introduced a novel metric, the SwitchDist, for comparing diverse saliency explanation methods
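As a rough illustration of the search idea behind the first item, the sketch below walks backward, breadth-first, from the gates feeding one class to collect that class's fan-in (the kind of subnetwork the figure caption calls a MiniNet). It is not the paper's Algorithm 1: the dictionary-based network encoding, the `fan_in` helper, and the toy gate names are all assumptions made for this example.

```python
from collections import deque


def fan_in(gates, roots):
    """Breadth-first walk from `roots` back toward the network inputs.

    gates: dict mapping gate name -> (op, left_source, right_source)
    roots: names of the gates that feed one class output
    Returns (gate names in visit order, set of reachable input names).
    """
    queue = deque(dict.fromkeys(roots))  # drop duplicate roots, keep order
    seen = set(queue)
    visited_gates, inputs = [], set()
    while queue:
        node = queue.popleft()
        if node not in gates:  # anything that is not a gate is a raw input
            inputs.add(node)
            continue
        visited_gates.append(node)
        _, left, right = gates[node]
        for src in (left, right):
            if src not in seen:
                seen.add(src)
                queue.append(src)
    return visited_gates, inputs


# Hypothetical toy network: "a1" feeds class a, "b1" feeds class b.
gates = {
    "g1": ("AND", "x0", "x1"),
    "g2": ("OR", "x1", "x2"),
    "g3": ("XOR", "x2", "x3"),
    "a1": ("AND", "g1", "g2"),
    "b1": ("OR", "g3", "x3"),
}
mini_gates, mini_inputs = fan_in(gates, ["a1"])
```

In this toy case the fan-in of class a contains gates `a1`, `g1`, and `g2` and inputs `x0`, `x1`, and `x2`; gate `g3` and input `x3` only influence class b and are left out.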

Applications in Research & AI Development

The eXpLogic framework addresses critical needs in multiple domains:

- Regulatory Compliance – Supporting AI transparency requirements in high-stakes applications like healthcare and finance

- Model Debugging & Refinement – Identifying when models learn spurious correlations or unexpected decision patterns

- Feature Engineering – Guiding feature selection based on discovered logical relationships

- Knowledge Discovery – Extracting potentially novel patterns from data that might not be apparent through traditional analysis

- AI Safety – Verifying that models operate according to expected logical principles rather than exploiting unanticipated correlations

Summary of Findings

The eXpLogic technique successfully demonstrates how logic-based neural networks can be made transparent and interpretable without sacrificing performance. By extracting both local explanations (which inputs matter for specific predictions) and functional explanations (how these inputs combine logically), the approach provides a comprehensive view of model decision-making. The work extends beyond traditional saliency methods by accounting for the unique architecture of DiffLogic networks and addressing known limitations of existing explainability techniques, particularly their tendency to prioritize visual clarity over accuracy. This research represents a significant step toward addressing the interpretability challenges that have limited deployment of sophisticated neural networks in high-stakes environments.